In thirty minutes, I built something I hadn't planned for, and it turned out to be more useful than what I was trying to solve!

The brief that kept changing

Over the past two years, I've run around thirty immersion tests. The format is always the same: 2 hours, on-site, in the open space with the team. The candidate works on a brief, asks questions if they want to, and presents at the end to explain their choices.

The problem is that the brief can't be identical for everyone. Each candidate comes in with a different profile and different expertise, our context evolves, and if I want the exercise to mean something, I have to adapt it. Which means: updating the assignment, briefing the people attending the interview, warning the team in case the candidate asks them questions during the 2 hours.

It's time-consuming. It breaks focus. And at the end of the day, it doesn't really solve what I'm actually trying to evaluate: risk-taking, analytical rigour, the way someone navigates uncertainty. Because when a candidate doesn't ask questions, the exercise becomes much harder to interpret. And that happens a lot more often than you'd think.

After about twenty processes, I told myself something had to change.

Thirty minutes and a craving

Honestly, this didn't start with a spec. It started with a craving.

A day packed with meetings, thirty minutes in front of me between lunch and my next meeting, and the desire to do something that felt alive: bring Growth and market finance together in an interactive interface. Two subjects I'm deeply interested in, not for a specific business reason at that moment, just for the pleasure of making them coexist. And an excuse to re-test Lovable.

I'd used Lovable before and hit the usual wall: when the tool doesn't understand your mental model, iteration becomes friction. This time, I structured everything upstream in ChatGPT, which has my professional context and knows about my interest in market finance. I broke the build into progressive prompts, each adding complexity without creating bugs or confusion.

In under an hour, I got:

- 12 foundational prompts.

- 4 or 5 patch prompts while testing.

- A functional and interactive web app that the whole team could try.

The moment it became something else

A few minutes before my 1-to-1 with Max, my manager, I ran through the whole app end to end just to make sure I was sharing something that actually worked. And somewhere in that process, in that moment of putting myself in a user's shoes, it clicked: we could use this with our own data. There was something here beyond the recruiting use case.

To understand why, you need to know what the app actually does:

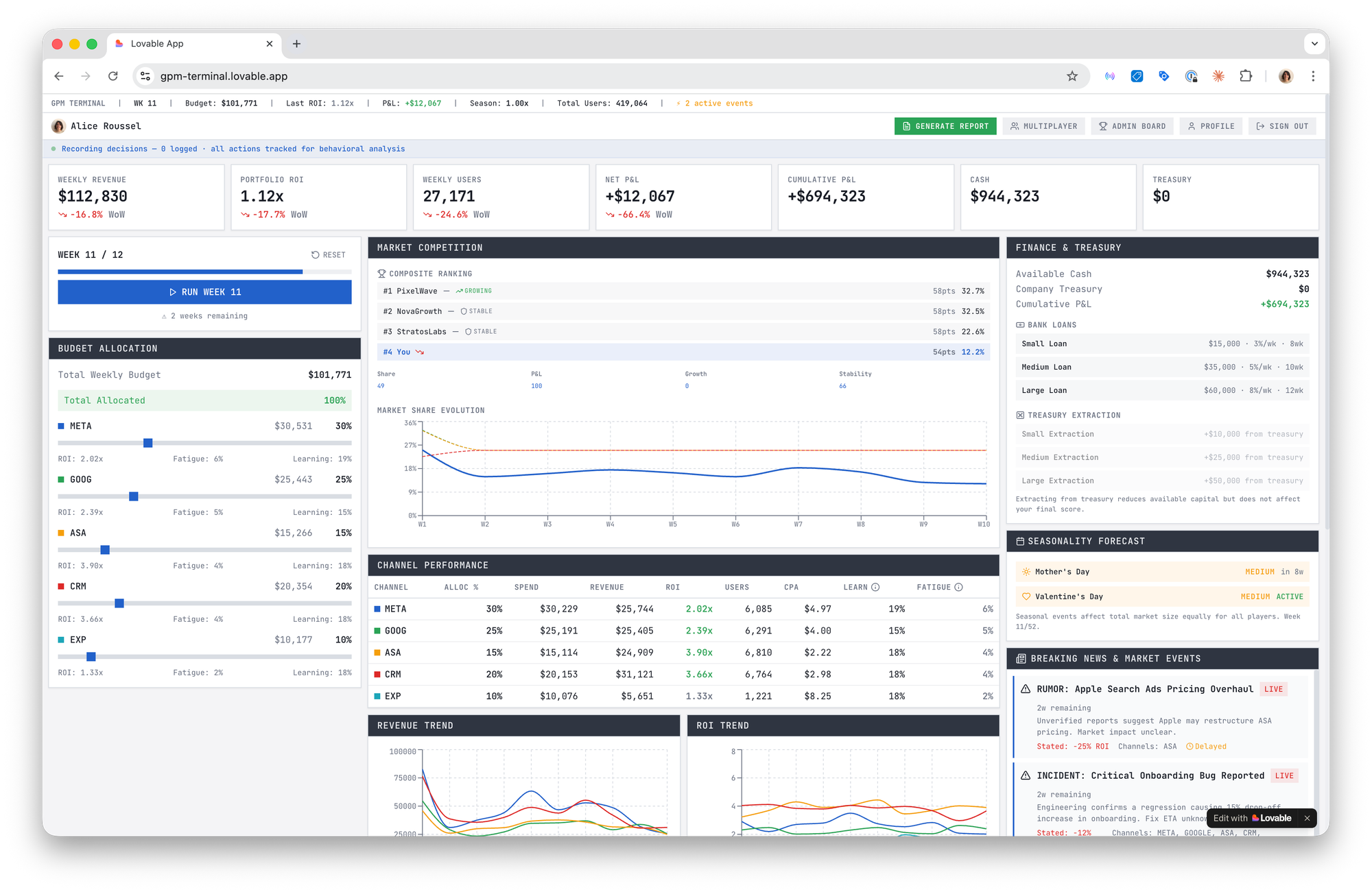

- GPM Terminal opens on a dark screen. Dense typography. Minimal interface. The aesthetic of a Bloomberg terminal. At the top: cash available, marketing profit, net growth score. Week number.

- From there, you make decisions. You adjust your weekly spend with a slider: increase aggressively, pull back to preserve cash, hold steady. You choose your optimization logic: Volume or Marketing Profit. You activate AB tests (creative, landing, pricing, channel expansion...) each with a cost, a probability of success, and a delayed impact. Some decisions pay off weeks later. Some never do.

- What makes it alive is the uncertainty. Every week, a market signal appears: a rumour about a competitor doubling their Meta investment, a softening in organic demand. But the signal might be exaggerated. It might be partially false. You never have full certainty. And the competitive landscape stays deliberately opaque: no exact spend figures, no clear strategy, just "auction competitiveness rising" and your own inference.

- After 12 weeks, the game ends with a diagnosis such as "You scaled too fast relative to cash resilience." or "Your portfolio of tests reduced long-term CAC." so you can see a trajectory.
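For readers who think in code, the mechanics above can be sketched in a few lines of Python. This is a hypothetical illustration under loose assumptions, not the code Lovable generated: every name (`ABTest`, `GameState`, `play_week`, `noisy_signal`) and every number (starting cash, efficiency, noise range) is invented for the example.

```python
import random
from dataclasses import dataclass, field


@dataclass
class ABTest:
    name: str
    cost: float        # paid up front, in cash
    p_success: float   # probability the test wins
    delay_weeks: int   # weeks before a win takes effect
    uplift: float      # relative gain in spend efficiency if it wins


@dataclass
class GameState:
    cash: float = 100_000.0      # assumed starting cash
    week: int = 1
    efficiency: float = 1.2      # assumed revenue per unit of spend
    pending: list = field(default_factory=list)  # (due_week, uplift) pairs


def noisy_signal(true_pressure: float, rng: random.Random) -> float:
    """What the player sees: the real competitive pressure, distorted."""
    return true_pressure * rng.uniform(0.5, 1.5)


def play_week(state: GameState, spend: float, tests: list, rng: random.Random) -> float:
    # Apply test wins whose delay has elapsed.
    due = [u for (w, u) in state.pending if w <= state.week]
    state.pending = [(w, u) for (w, u) in state.pending if w > state.week]
    for uplift in due:
        state.efficiency *= 1 + uplift

    # Launch new tests: pay now, benefit later (or never).
    for t in tests:
        state.cash -= t.cost
        if rng.random() < t.p_success:
            state.pending.append((state.week + t.delay_weeks, t.uplift))

    # Hidden pressure erodes returns; the player only gets the noisy version.
    true_pressure = rng.uniform(0.0, 0.3)
    signal = noisy_signal(true_pressure, rng)
    state.cash += spend * state.efficiency * (1 - true_pressure) - spend
    state.week += 1
    return signal


def run_game(seed: int = 0) -> GameState:
    """Play 12 weeks with a fixed spend and one creative test in week 1."""
    rng = random.Random(seed)
    state = GameState()
    creative = ABTest("creative", cost=5_000, p_success=0.4, delay_weeks=3, uplift=0.1)
    for i in range(12):
        play_week(state, spend=10_000, tests=[creative] if i == 0 else [], rng=rng)
    return state
```

The point of the sketch is the shape of the loop: decisions are paid for immediately, their effects arrive weeks later, and the signal you react to is never the true state of the market.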

And that's exactly what I'd been trying to evaluate in immersion tests all along: not whether a candidate knows the right answer, but how they reason under incomplete information. How they weigh short-term pressure against long-term optionality. Whether they react to every signal or know when to hold.

GPM Terminal doesn't just simulate a business environment. It surfaces how someone thinks. This is what I was thinking about when I walked into that 1-to-1. Not a recruiting tool anymore but a thinking tool.

Max's reaction was immediate. This year, broader AI adoption has been a company-wide priority: the idea that we should collectively get better at using these tools, not just individually. So when he saw the app, it landed. We only had a few minutes at the end of the 1-to-1, but he tested it on his own that same day and followed up on Slack. No nudge needed. No demo replay. He just went and played.

That's the appeal of an interactive tool: you don't have to sit through an explanation. You just interact and the reasoning becomes self-evident.

His message? He told me he'd gotten told off by the fictional manager I'd built into the game. That's my touch: a sassy, sharp-tongued character I love adding to these kinds of tools. When I saw his Slack message I laughed, and I was genuinely happy he'd taken the time to test it the same day. But beyond the fun of it, his spontaneous engagement proved something: people don't need to be convinced to use a tool that creates pull.

Give GPM Terminal a try, solo or in multiplayer mode.

The real unlock

There's a piece by Elena Verna that's been on my mind lately. She describes a new kind of employee: AI-native, unburdened by legacy processes and approval chains, someone who sees a problem and starts building. Ownership, autonomy, and velocity as a moat. And a hidden superpower: when you can move this fast, the cost of failure plummets. More bold bets. Less analysis paralysis. A real advantage in learning loops.

I'm not fresh out of school. But I recognized something in that description.

What struck me most when testing the app end to end is how easy it is to make invisible reasoning visible. The trade-offs I juggle every day (seasonality, audience fatigue, cash constraints, delayed test impact, exogenous shocks...) usually live in my head. In a meeting, for instance, they get debated in fragments, but in an interactive system they become tangible. You move a slider and feel the consequence three weeks later. You react to a rumour and see how fragile your runway actually is. It's not a better explanation. It's a translation of how you think.

That's the real unlock for someone who wants more strategic impact: not a more sophisticated attribution model but a tool that makes your mental model legible to the rest of the company. Something that translates the way you think into something anyone can interact with.

You don't need to wait for someone to ask for it. You don't need budget, or a dev, or a roadmap. You just need thirty minutes and the willingness to build something for the pleasure of it first.

The use case will find itself 🤌
